Limitations of Classical Computing

There is a limit to what we can compute on our traditional computers.

Consider a small hospital with about 100 nurses, where we want to schedule 50 of them to work on a particular day. The number of ways to choose those 50 nurses is given by the binomial coefficient from the binomial theorem:

\[ \binom{100}{50} = \frac{100!}{50!\,50!} \approx 1.01 \times 10^{29} \]
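As a quick check on the size of that number, here is a minimal sketch using Python's standard library. The variable names are illustrative and not part of the original text.

```python
import math

n_nurses = 100     # nurses on staff
n_scheduled = 50   # nurses needed on the day

# Number of ways to choose 50 nurses out of 100, ignoring order.
n_schedules = math.comb(n_nurses, n_scheduled)

print(f"{n_schedules:.3e}")
```

Python integers have arbitrary precision, so `math.comb` returns the exact count rather than a floating-point approximation.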

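That’s a lot! Now suppose we want to select the best combination of nurses, taking into account their level of experience, the number of consecutive days they’ve worked, their risk of burnout, the hospital’s demand for that day, and so on. To hold every possible combination in memory at once on a classical computer, with each combination encoded as the 100-bit string described in the note below, we’d need about \(\binom{100}{50} \times 100 \approx 10^{31}\) bits, or roughly \(1.26 \times 10^{30}\) bytes.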

Note

Each combination corresponds to a bitstring of length \(n=100\). If nurse #40 is scheduled to work that day, bit #40 has the value 1; otherwise it has the value 0. Every valid bitstring therefore contains exactly 50 bits with the value 1 and 50 bits with the value 0.

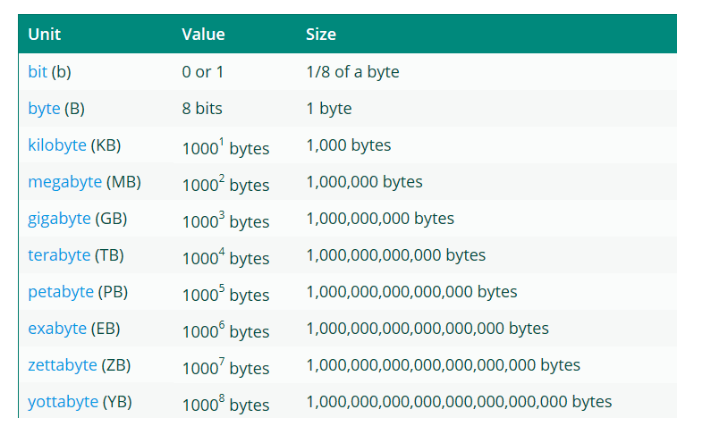
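To make the bitstring encoding concrete, the sketch below enumerates every schedule for a toy case of 4 nurses with 2 on shift. The helper `schedule_bitstrings` is a hypothetical name introduced here for illustration, and positions are counted from the left starting at nurse #1.

```python
from itertools import combinations

def schedule_bitstrings(n, k):
    """Yield every length-n bitstring with exactly k ones (hypothetical helper)."""
    for working in combinations(range(n), k):
        bits = ["0"] * n
        for nurse in working:
            bits[nurse] = "1"  # index 0 stands for nurse #1
        yield "".join(bits)

# Toy case: 4 nurses, 2 scheduled.
small = list(schedule_bitstrings(4, 2))
print(small)
# ['1100', '1010', '1001', '0110', '0101', '0011']
```

The toy case yields 6 bitstrings, matching \(\binom{4}{2} = 6\). The same loop with `n=100` and `k=50` would never finish in practice, which is the point of the example.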

The memory needed to hold every bitstring at once is on the order of \(10^6 \text{ YB}\) (yottabytes), which is millions of times more than the estimated global data storage capacity (~ \(149 \text{ ZB}\) in 2024).
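The storage estimate can be reproduced with a few lines of arithmetic. This is a rough sketch that assumes one bit per nurse, no compression, and decimal SI units (1 YB = \(10^{24}\) bytes, 1 ZB = \(10^{21}\) bytes); the 149 ZB figure is taken from the text above.

```python
import math

BITS_PER_SCHEDULE = 100   # one bit per nurse (assumption: no compression)
BYTES_PER_ZB = 10**21     # decimal SI zettabyte
BYTES_PER_YB = 10**24     # decimal SI yottabyte
GLOBAL_DATA_ZB = 149      # 2024 figure quoted in the text

# Total storage for all C(100, 50) bitstrings.
total_bits = math.comb(100, 50) * BITS_PER_SCHEDULE
total_bytes = total_bits / 8

total_yb = total_bytes / BYTES_PER_YB
ratio = total_bytes / (GLOBAL_DATA_ZB * BYTES_PER_ZB)

print(f"{total_yb:.2e} YB")
print(f"about {ratio:.1e} times the quoted global figure")
```

Under these assumptions the total comes out to a little over a million yottabytes, consistent with the order-of-magnitude estimate.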

We may never need to store or process every candidate for a problem like this at once, but the example shows the scale we are dealing with. For much larger and more complex problems, such as modeling the global weather, predicting new materials, or finding the best drug to treat a particular disease, the storage and computing power required are enormous!